We will soon be sending a survey for sign-ups. Stay tuned!

The Seminar

Just another seminar?

The PhD Pizza Seminar, or Junior Seminar (« séminaire jeunes chercheurs »), allows Inria PhD students, interns, and post-docs to present their work. The talks are meant to be easily understandable, so that anyone can attend.

The Junior Seminar is a good opportunity to discover what the other teams are up to: if you ever wondered what's happening in other Inria teams, or if you want to meet other PhD students, this seminar is made for you!

Where? When?

The seminar is held once a month, with a break during the summer. It takes place in the Jacques-Louis Lions amphitheatre near the reception, usually at 11:30 am.

Format

The seminar is held in English to make sure everyone can attend. There is a 30-minute talk, followed by a 5-minute session for questions.

We then share pizza provided by Inria.

I want to speak! I have a student who should speak!

Send us an email at semdoc-request@inria.fr, and we'll make sure you can give a talk :).

Who are we?

Currently in charge of the seminar:

Jakob Maier +

Remy Seassau

Formerly in charge of the seminar:

Roland Andrews +

Alexandre Moine +

Gaspard Beugnot +

Clémence Bouvier +

Théophile Cantelobre +

Denis Merigoux +

Éloïse Berthier +

Alix Chagué +

Armaël Guéneau +

Marie Puren +

Léa Boittin +

Patrik Daniel +

Matteo Aletti +

Georgios Bouloukakis +

Mathieu Feuillet +

Emanuele Leoncini +

Jonathan Protzenko +

Elisa Schenone +

Pauline Traynard +

Jacques-Henri Jourdan.

We're always looking for help, so if you want to help us find new victims and organize rehearsals, do contact us!

Past Talks

Here are the archives of the Junior Seminar, with the abstracts, the slides and the videos of the past presentations.

|

May 26th, 2026 |

|

Abstract |

|

April 8th, 2026 |

|

Relaxed Stein Variational Gradient Descent: An Accelerated Sampling Method for Bayesian Inverse Problems Speaker: Corrie James Team: COMMEDIA |

Abstract |

|

March 11th, 2026 |

|

Abstract |

|

February 18th, 2026 |

|

Keeping the Lights On: High-Dimensional Stochastic Control for Power Systems Speaker: Eliste Devey Team: MATHRISK |

Abstract |

|

February 3rd, 2026 |

|

Abstract |

|

December 17th, 2025 |

|

Compressing quantum information: How tensor networks can be useful for designing superconducting quantum computers Speaker: Emilio Rui Team: QUANTIC |

Abstract |

|

November 4th, 2025 |

|

Trust Your Probabilities: A Beginner's Guide to Classifier Calibration Speaker: Eugène Berta Team: SIERRA |

Abstract |

|

May 13th, 2025 |

|

Abstract |

|

April 8th, 2025 |

|

Dissecting insect behavior with self-supervised learning and kernel two-sample tests Speaker: Alexandre Blanc Team: EPIMETHEE |

Abstract |

|

March 11th, 2025 |

|

Termination resilience static analysis by abstract interpretation - How do external-inputs impact termination of programs? Speaker: Naïm Moussaoui Remil Team: ANTIQUE |

Abstract |

|

February 11th, 2025 |

|

Tabular Data: The Last Bastion of Classical Machine Learning? Speaker: David Holzmüller Team: SIERRA Slides: [pdf] |

Abstract |

|

December 10th, 2024 |

|

Finding Meaning: Semantics and Verification in Programming Languages Speaker: Remy Seassau Team: CAMBIUM Slides: [pdf] |

Abstract |

|

November 12th, 2024 |

|

How to select the best pizza? or Model selection for Time Series Anomaly Detection Speaker: Emmanouil Sylligardos Team: VALDA |

Abstract |

|

October 8th, 2024 |

|

Abstract |

|

September 10th, 2024 |

|

Anomaly detection in neuroimaging – designing computer-aided diagnosis tools for brain disorders. Speaker: Maelys Solal Team: ARAMIS - Paris Brain Institute Slides: [pdf] |

Abstract |

|

May 14th, 2024 |

|

Will my table be large enough for so many ingredients? Or: Formal verification of heap space bounds. Speaker: Alexandre Moine Team: CAMBIUM Slides: [pdf] |

Abstract |

|

April 9th, 2024 |

|

The Poisson problem: How can a simple equation be so complicated to solve efficiently? Speaker: Clément Maradei Team: SERENA Slides: [pdf] |

Abstract |

|

March 12th, 2024 |

|

Can we find the shortest path, without a map? Is maze-solving parallelizable? Speaker: Romain Cosson Team: ARGO Slides: [pdf] |

Abstract |

|

February 13th, 2024 |

|

|

November 15th, 2022 |

|

Modularity in programming languages, the example of OCaml Speaker: Clément Blaudeau Team: CAMBIUM Slides: [pdf] |

Abstract |

|

Understanding cryptographic assumptions using the CSSA logic Speaker: Justine Sauvage Team: PROSECCO |

Abstract |

|

October 18th, 2022 |

|

Mathematical models of allocation of resources in a bacterium Speaker: Jana Zaherddine Team: MAMBA Slides: [pdf] |

Abstract |

|

Abstract |

|

September 20th, 2022 |

|

Abstract |

|

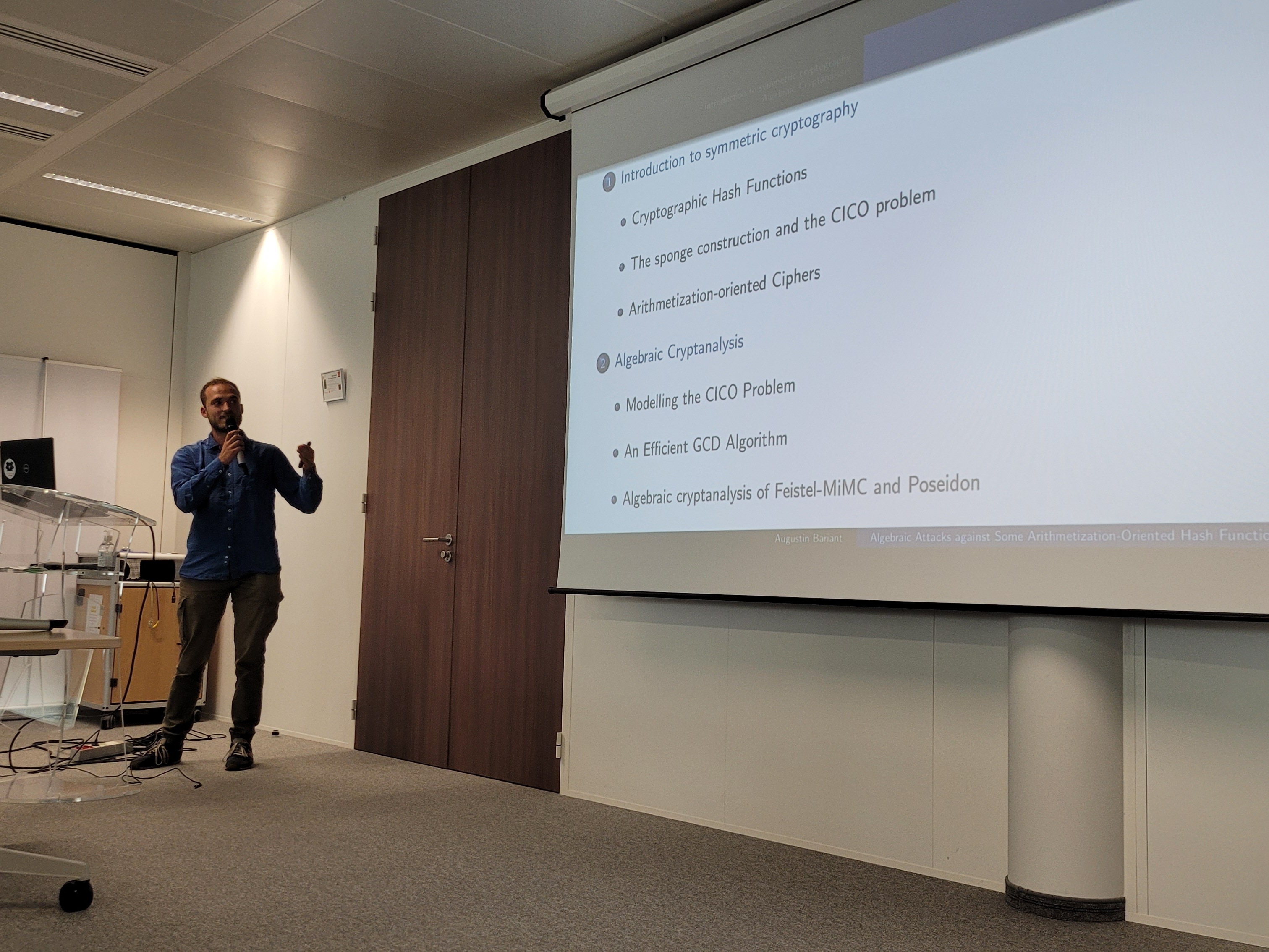

Algebraic Attacks against Some Arithmetization-Oriented Hash Functions Speaker: Augustin Bariant Team: COSMIQ Slides: [pdf] |

Abstract |

|

June 21st, 2022 |

|

Reinforcement learning from the basics: a tale of learning and control Speaker: Eloïse Berthier Team: SIERRA Slides: [pdf] |

Abstract |

|

May 17th, 2022 |

|

Assembly Planning from Observations under Physical Constraints Speaker: Thomas Chabal Team: WILLOW Slides: [pdf] |

Abstract |

|

December 14th, 2021 |

|

Coordinating a Swarm of Micro-Robots Under Lossy Communication Speaker: Razane Abu-Aisheh Team: EVA Slides: [pdf] |

Abstract |

|

November 16th, 2021 |

|

A filter-based search engine powered by machine learning. Speaker: Romain Zimmer Team: Sievable, Inria Startup Studio |

Abstract |

|

October 19th, 2021 |

|

Statistical models for the minimization of energy consumption in cyber-physical system Speaker: Marwan Wehaiba Team: Kopernic Slides: [pdf] Video recording: [link] |

Abstract |

|

Representations in Random Deep Neural Networks Speaker: Hadi Daneshmand Team: Sierra Slides: [pdf] Video recording: [link] |

Abstract |

|

May 18th, 2021 |

|

Just Ask: Learning to Answer Questions from Millions of Narrated Videos. Speaker: Antoine Yang Team: Willow Slides: [pdf] Video recording: [link] |

Abstract |

|

March 16th, 2021 |

|

Learning spectro-temporal representation of complex sounds with parameterized neural networks. Speaker: Rachid Riad Team: Coml Slides: [pdf] |

Abstract |

|

February 16th, 2021 |

|

Abstract |

|

January 19th, 2021 |

|

A New Internet Standard for Hybrid Public Key Encryption. Speaker: Benjamin Lipp Team: Prosecco Slides: [pdf] Video recording: [link] |

Abstract |

|

December 15th, 2020 |

|

Practical computation of homogenized coefficients. Speaker: Olga Gorynina Team: Matherials Slides: [pdf] Video recording: [link] |

Abstract |

|

February 18th, 2020 |

|

A Formal Study of the French Tax Code’s Implementation. Speaker: Denis Merigoux Team: PROSECCO Slides: [pdf] |

Abstract |

|

The Martingale Optimal Transport (MOT) problem. Speaker: William Margheriti Team: MATHRISK Slides: [pdf] |

Abstract |

|

May 5th, 2019 |

|

Abstract |

|

Abstract |

|

March 19th, 2019 |

|

Abstract |

|

Abstract |

|

February 19th, 2019 |

|

Abstract |

|

Abstract |

|

December 18th, 2018 |

|

Abstract |

|

Abstract |

|

November 11th, 2018 |

|

Abstract |

|

Abstract |

|

October 16th, 2018 |

|

Abstract |

|

Abstract |

|

June 19th, 2018 |

|

Abstract |

|

Abstract |

|

April 17th, 2018 |

|

Abstract |

|

Abstract |

|

March 20th, 2018 |

|

Abstract |

|

Abstract |

|

February 20th, 2018 |

|

Abstract |

|

Abstract |

|

December 12th, 2017 |

|

Abstract |

|

Abstract |

|

October 17th, 2017 |

|

Speaker: Léo Perrin Team: SECRET |

Abstract |

|

Abstract |

|

June 13th, 2017 |

|

Abstract |

|

Speaker: Salah Eddine Saidi Team: AOSTE |

Abstract |

|

May 16th, 2017 |

|

Abstract |

|

Abstract |

|

April 18th, 2017 |

|

Abstract |

|

Abstract |

|

March 14th, 2017 |

|

Abstract |

|

Abstract |

|

February 14th, 2017 |

|

Abstract |

|

Speaker: Guillaume Terradot Team: ANTIQUE |

Abstract |

|

December 13th, 2016 |

|

Abstract |

|

Abstract |

|

November 15th, 2016 |

|

Abstract |

|

Abstract |

|

October 18th, 2016 |

|

Abstract |

|

Abstract |

|

June 21st, 2016 |

|

Abstract |

|

Abstract |

|

May 17th, 2016 |

|

Abstract |

|

Speaker: Yi Yin Team: MAMBA |

Abstract |

|

April 19th, 2016 |

|

Abstract |

|

Abstract |

|

March 15th, 2016 |

|

Abstract |

|

Abstract |

|

February 16th, 2016 |

|

Abstract |

|

Abstract |

|

November 17th, 2015 |

|

Abstract |

|

Abstract |

|

October 20th, 2015 |

|

Abstract |

|

Abstract |

|

June 16th, 2015 |

|

Abstract |

|

Speaker: Clément Poncelet Team: MUTANT Slides: [pdf] (also available in an alternate format) Video recording: [link] |

Abstract |

|

May 19th, 2015 |

|

Abstract |

Abstract |

|

April 21st, 2015 |

|

Abstract |

|

Abstract |

|

March 17th, 2015 |

|

Speaker: Joachim Cohen Team: QUANTIC Slides: [pdf] (also available in an alternate format) Video recording: [link] |

Abstract |

|

Abstract |

|

December 16th, 2014 |

|

Speaker: Géraldine Cellière Team: MAMBA Slides: [pdf] (also available in an alternate format) Video recording: [link] |

Abstract |

|

Abstract |

|

October 21st, 2014 |

|

Abstract |

|

Abstract |

|

June 17th, 2014 |

|

Abstract |

|

Abstract |

|

April 15th, 2014 |

|

Abstract |

|

Abstract |

|

March 18th, 2014 |

|

Abstract |

|

Abstract |

|

February 13th, 2014 |

|

Abstract |

|

Abstract |

|

January 21st, 2014 |

|

Abstract |

|

Abstract |

|

December 17th, 2013 |

|

Abstract |

|

Abstract |

|

November 19th, 2013 |

|

Abstract |

|

Abstract |

|

October 22nd, 2013 |

|

Abstract |

|

Abstract |

|

June 18th, 2013 |

|

Abstract |

|

Speaker: Emanuele Leoncini Team: RAP Slides: [pdf] (also available in an alternate format) Video recording: [link] |

Abstract |

|

May 21st, 2013 |

|

Abstract |

|

Abstract |

|

April 23rd, 2013 |

|

Abstract |

|

Abstract |

|

March 26th, 2013 |

|

Abstract |

|

Abstract |

|

February 26th, 2013 |

|

Abstract |

|

Abstract |

|

January 15th, 2013 |

|

Abstract |

|

Abstract |

|

December 14th, 2012 |

|

Abstract |

|

Abstract |

|

November 20th, 2012 |

|

Abstract |

|

Abstract |

|

October 16th, 2012 |

|

Abstract |

|

Abstract |

|

September 18th, 2012 |

|

Abstract |

|

Abstract |

|

June 26th, 2012 |

|

Abstract |

|

Abstract |

|

May 15th, 2012 |

|

Abstract |

|

Abstract |

|

April 10th, 2012 |

|

Abstract |

|

Abstract |

|

March 20th, 2012 |

|

Abstract |

|

Abstract |

|

February 14th, 2012 |

|

Abstract |

|

Abstract |

|

December 20th, 2011 |

|

Abstract |

|

Abstract |

|

November 15th, 2011 |

|

Abstract |

|

Abstract |

|

October 18th, 2011 |

|

Abstract |

|

Abstract |

|

September 20th, 2011 |

|

Abstract |

|

Abstract |